Affective State Detection

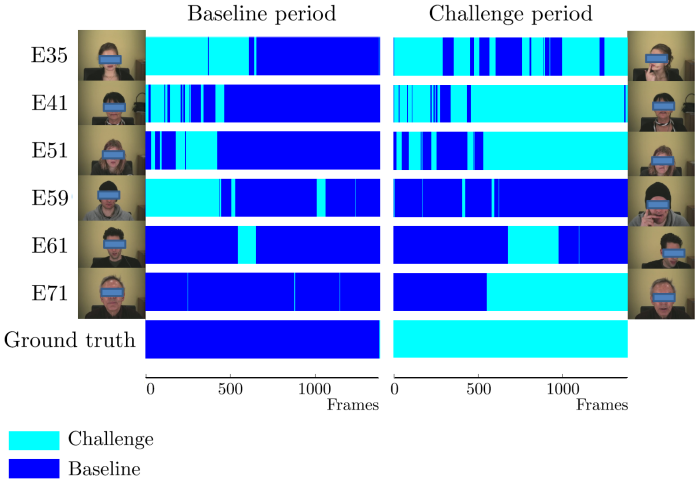

One line of development is estimating the user's affective state during human-machine interaction in order to steer the dialog strategy. This lets the machine adapt to the skills of the user. Different modalities, such as audio, video and physiological signals, can supply features from which the affective state is estimated, and this state may in turn be relevant to the user's disposition. In the figure, a time window of features derived from facial features (action units), prosodic inputs and gestures is used to estimate whether the test persons are in a relaxed (baseline) or a stressed (challenge) affective state. Investigations showed that quite simple classifier architectures, such as linear filter classifiers, give good results provided the input information is suitably prepared. Experiments were performed with subjects of different age, gender and educational background.
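The windowing and linear classification described above can be illustrated with a small sketch. It is not the implementation used in the experiments: the three per-frame channels, the window length, the least-squares fit and all function names are assumptions made for illustration, and the data are synthetic random numbers rather than recorded features.

```python
import numpy as np


def window_features(frames, window):
    """Stack each run of `window` consecutive frames into one feature vector."""
    frames = np.asarray(frames, dtype=float)
    n_windows = frames.shape[0] - window + 1
    return np.stack([frames[i:i + window].ravel() for i in range(n_windows)])


def add_bias(X):
    """Append a constant column so the linear model can learn an offset."""
    return np.hstack([X, np.ones((X.shape[0], 1))])


def fit_linear_classifier(X, y):
    """Least-squares fit of weights so that sign(X w) approximates y in {-1, +1}."""
    w, *_ = np.linalg.lstsq(add_bias(X), y, rcond=None)
    return w


def predict(X, w):
    """Return +1 (challenge) or -1 (baseline) for each windowed sample."""
    return np.where(add_bias(X) @ w >= 0.0, 1, -1)


# Synthetic stand-in data (assumption): three per-frame channels playing the
# role of a facial, a prosodic and a gesture feature.
rng = np.random.default_rng(0)
n_frames, window = 200, 10
baseline = rng.normal(0.0, 1.0, size=(n_frames, 3))   # "relaxed" frames
challenge = rng.normal(1.5, 1.0, size=(n_frames, 3))  # "stressed" frames

X_base = window_features(baseline, window)
X_chal = window_features(challenge, window)
X = np.vstack([X_base, X_chal])
y = np.concatenate([-np.ones(len(X_base)), np.ones(len(X_chal))])

w = fit_linear_classifier(X, y)
accuracy = float(np.mean(predict(X, w) == y))
print(f"training accuracy on synthetic data: {accuracy:.3f}")
```

Because the two synthetic classes are well separated by construction, the printed accuracy says nothing about performance on real recordings. The sketch only shows how a time window turns per-frame features into a single input vector for a linear decision rule.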

[85] Axel Panning, Ingo Siegert, Ayoub Al-Hamadi, Andreas Wendemuth, Dietmar Rösner, Jörg Frommer, Gerald Krell, and Bernd Michaelis. Multimodal Affect Recognition in Spontaneous HCI Environment. In Proceedings of the IEEE International Conference on Signal Processing, Communications and Computing (ICSPCC 2012), pages 430-435, 2012.

Contact: Gerald Krell